Neural Texture Compression: How Intel’s TSNC Changes the Game for GPU Memory

Neural texture compression is quietly becoming one of the most important innovations in modern graphics technology. As games grow heavier and textures become more detailed, the pressure on VRAM and storage continues to rise. And instead of simply increasing hardware requirements, companies are starting to rethink how data is handled in the first place.

Intel’s latest development, Texture Set Neural Compression (TSNC), is a clear sign of that shift. It’s not just about squeezing files smaller—it’s about fundamentally changing how textures are stored, streamed, and reconstructed in real time.

The Rise of Neural Texture Compression

The idea of using neural networks to compress textures isn’t entirely new. NVIDIA recently showcased its own approach, demonstrating that AI can significantly reduce texture sizes while maintaining visual fidelity.

But what’s interesting is how quickly this concept is evolving into a broader industry direction. Intel’s entry into the space suggests that neural compression isn’t just experimental anymore—it’s becoming a competitive frontier.

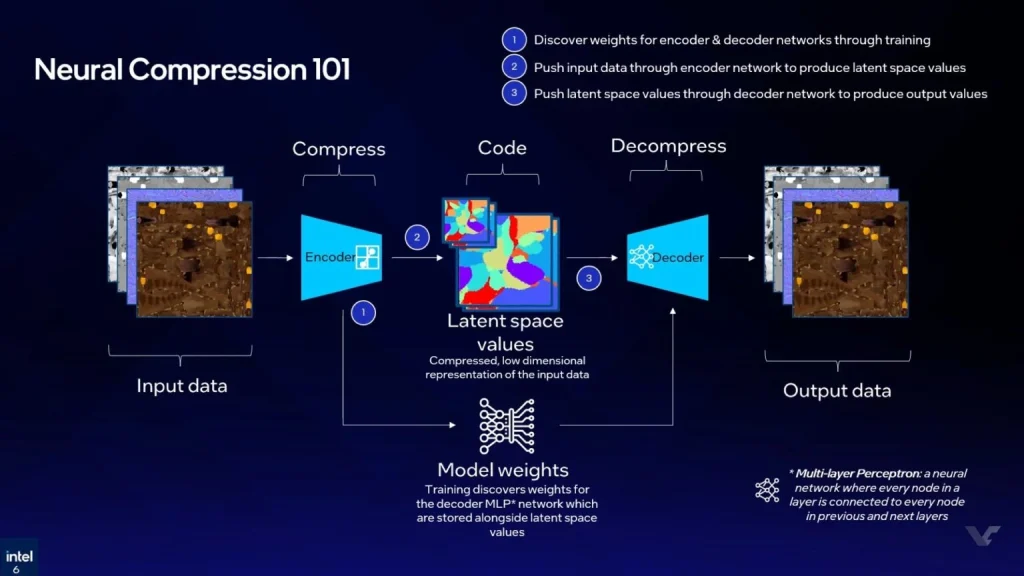

Instead of relying on traditional block compression methods, neural approaches train models to “understand” texture data. This allows them to reconstruct images more efficiently, often achieving far higher compression ratios than legacy techniques.
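For context on the legacy baseline: block-compressed formats store every 4×4 block of texels in a fixed number of bytes, so their ratio is fixed by the format. The minimal sketch below works that out for two common formats against uncompressed RGBA8. The function name is illustrative, and it says nothing about how a neural codec arrives at its own ratios.

```python
# Fixed-rate block compression: each 4x4 texel block occupies a fixed byte count.
BLOCK_TEXELS = 4 * 4
BYTES_PER_BLOCK = {"BC1": 8, "BC7": 16}   # standard block sizes for these formats
UNCOMPRESSED_BYTES_PER_TEXEL = 4          # RGBA8: one byte per channel


def block_compression_ratio(fmt: str) -> float:
    """Ratio of uncompressed RGBA8 size to block-compressed size."""
    uncompressed_bytes = BLOCK_TEXELS * UNCOMPRESSED_BYTES_PER_TEXEL
    return uncompressed_bytes / BYTES_PER_BLOCK[fmt]


for fmt in BYTES_PER_BLOCK:
    print(f"{fmt}: {block_compression_ratio(fmt):.0f}:1 versus RGBA8")
```

Because the rate is fixed per block, the only way to get a smaller result with these formats is to pick a different format or a lower resolution, which is the limitation learned approaches try to get around.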

What Is Intel TSNC and How It Works

Intel’s Texture Set Neural Compression (TSNC) was first introduced during GDC 2025 as part of Intel Labs’ research efforts. Since then, the project has evolved into a standalone SDK, complete with a dedicated API for decompression that supports C, C++, and HLSL.

At its core, TSNC uses neural networks to encode and decode texture sets. These models run with hardware acceleration via Intel’s XMX units on supported GPUs, making the process practical for real-world applications rather than just theoretical demos.

What makes this particularly compelling is how adaptable the system is. Developers aren’t locked into a single workflow—they can choose when and how decompression happens. Whether it’s during installation, at load time, or even dynamically during streaming, TSNC offers flexibility that traditional methods simply don’t.

Flexibility in Real-World Use Cases

One of the understated strengths of TSNC is how configurable it is. Developers can prioritize different goals depending on their needs.

If storage space is the main concern, textures can remain heavily compressed on disk. If VRAM is the bottleneck, decompression strategies can be adjusted to optimize runtime memory usage instead.

This opens up interesting possibilities, especially for large-scale games or systems with limited hardware resources. Instead of designing around strict memory constraints, developers can dynamically balance between storage, performance, and quality.

In practical terms, this could mean fewer compromises in texture detail—or smoother experiences on lower-end GPUs.
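The choice of when to decompress can be pictured as a small policy function. This is a hypothetical sketch, not part of the TSNC SDK: `DecodeStage`, `choose_decode_stage`, and the mapping from constraints to stages are all assumptions made for illustration.

```python
from enum import Enum


class DecodeStage(Enum):
    """Points at which textures could be decompressed (hypothetical names)."""
    INSTALL = "install"   # decode once, when the game is installed
    LOAD = "load"         # decode when a level or asset is loaded
    STREAM = "stream"     # decode on demand while streaming


def choose_decode_stage(disk_limited: bool, vram_limited: bool) -> DecodeStage:
    """Pick a decode stage from the dominant constraint (assumed policy)."""
    if vram_limited:
        # Keep data compressed as long as possible to reduce runtime memory use.
        return DecodeStage.STREAM
    if disk_limited:
        # Keep textures compressed on disk and expand them only when loading.
        return DecodeStage.LOAD
    # No pressure on either resource: pay the decode cost once, up front.
    return DecodeStage.INSTALL


print(choose_decode_stage(disk_limited=True, vram_limited=False).value)
```

A real engine would likely decide per texture set rather than globally, and would weigh decode cost as well; the point here is only that the decision becomes a tunable policy.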

Two Modes: Quality vs Maximum Compression

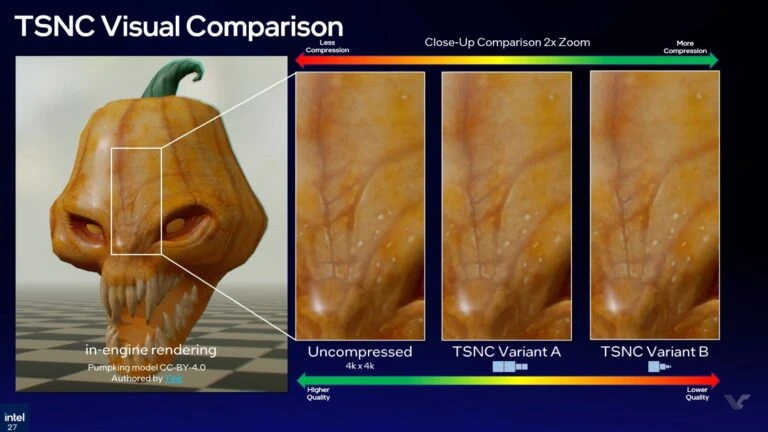

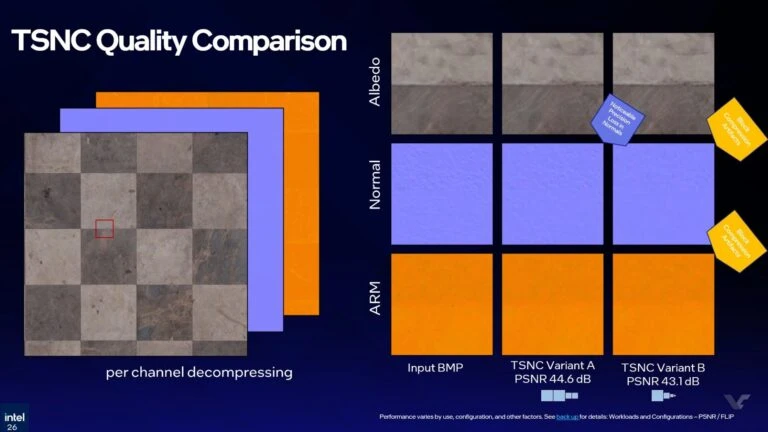

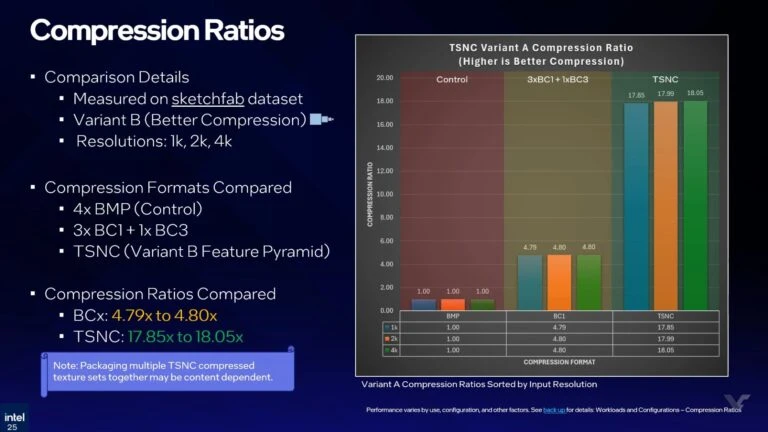

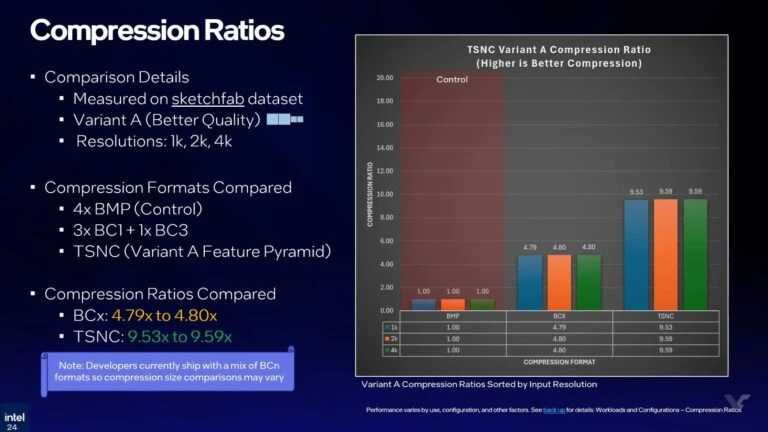

Intel’s TSNC operates in two primary modes: Variant A and Variant B. Each one reflects a different philosophy.

Variant A focuses on preserving higher visual quality while still delivering strong compression. Variant B, on the other hand, pushes compression to its limits, prioritizing minimal data size over fidelity.

The numbers are where things get interesting. Traditional block compression methods typically achieve around a 4.8× reduction in texture size. TSNC’s Variant A improves that to roughly 9×, while Variant B can reach up to an impressive 18× compression ratio.

That kind of leap isn’t incremental—it’s transformative. It suggests a future where massive texture libraries can exist without overwhelming system memory.
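To put the quoted ratios in concrete terms, the sketch below applies them to a texture set of a given uncompressed size. The 256 MiB input is a hypothetical figure chosen for illustration; only the three ratios come from the article.

```python
# Compression ratios quoted in the article.
RATIOS = {
    "block compression": 4.8,
    "TSNC Variant A": 9.0,
    "TSNC Variant B": 18.0,
}


def compressed_size_mib(uncompressed_mib: float, ratio: float) -> float:
    """Size after compression, given the uncompressed size and a ratio."""
    return uncompressed_mib / ratio


uncompressed_mib = 256.0  # hypothetical texture set size, not from the article
baseline = RATIOS["block compression"]

for name, ratio in RATIOS.items():
    size = compressed_size_mib(uncompressed_mib, ratio)
    gain = ratio / baseline  # how much smaller than the block-compressed result
    print(f"{name}: {size:.1f} MiB ({gain:.2f}x vs block compression)")
```

Relative to the block-compressed baseline, the quoted figures put Variant A at a little under twice as small (9 / 4.8 ≈ 1.9) and Variant B at a little under four times as small (18 / 4.8 ≈ 3.75).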

Performance Trade-Offs and Industry Context

Of course, there’s no such thing as free optimization. Neural texture compression introduces a new trade-off: it spends compute to save memory.

In similar implementations, such as NVIDIA’s approach, performance impact can vary significantly—from minor slowdowns to more noticeable drops depending on resolution and mode.

But here’s the nuance. If a system is already limited by VRAM, reducing memory pressure can actually improve overall performance. In other words, a slight computational overhead might lead to higher FPS simply because the GPU is no longer bottlenecked by memory constraints.

This is why neural compression isn’t just about efficiency—it’s about balance. It gives developers another lever to pull, one that can reshape how performance is optimized across different hardware configurations.
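The trade-off can be illustrated with a deliberately simple toy model. Everything here is an assumption made for illustration: the linear spill penalty, the function name, and all the numbers are hypothetical and are not measurements of TSNC or any other implementation.

```python
def frame_time_ms(
    base_ms: float,
    decode_ms: float,
    working_set_gib: float,
    vram_gib: float,
    spill_penalty_ms_per_gib: float,
) -> float:
    """Toy model: render cost + decode cost + a penalty for exceeding VRAM."""
    overflow_gib = max(0.0, working_set_gib - vram_gib)
    return base_ms + decode_ms + overflow_gib * spill_penalty_ms_per_gib


# Hypothetical scenario: an 8 GiB card running a scene that does not fit.
without_neural = frame_time_ms(
    base_ms=12.0, decode_ms=0.0,
    working_set_gib=10.0, vram_gib=8.0, spill_penalty_ms_per_gib=5.0,
)
# Same scene with a smaller working set, paying an extra decode cost per frame.
with_neural = frame_time_ms(
    base_ms=12.0, decode_ms=2.0,
    working_set_gib=6.0, vram_gib=8.0, spill_penalty_ms_per_gib=5.0,
)

print(f"without: {without_neural:.1f} ms, with: {with_neural:.1f} ms")
```

In this made-up scenario the added decode cost is smaller than the penalty it removes, so the frame gets faster. When the working set already fits in VRAM, the same model shows only the extra decode cost, which matches the slowdowns described for memory-unconstrained cases.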

Final thoughts

What Intel is building with TSNC feels less like a single feature and more like part of a broader shift in graphics technology. As AI continues to integrate deeper into rendering pipelines, techniques like neural texture compression may soon become standard rather than experimental.

And if that happens, the way we think about textures, memory, and performance could change entirely.

Source: videocardz