Neural Texture Compression by NVIDIA: A Quiet Revolution in Game Rendering

When NVIDIA talks about neural rendering, most people immediately think of DLSS. It’s the flashy feature, the one that boosts FPS and gets all the headlines. But beneath that surface, something less visible and arguably more transformative is happening.

At GDC, NVIDIA pulled back the curtain on a different side of neural rendering—one that doesn’t just enhance frames after they’re rendered, but fundamentally changes how those frames are built in the first place.

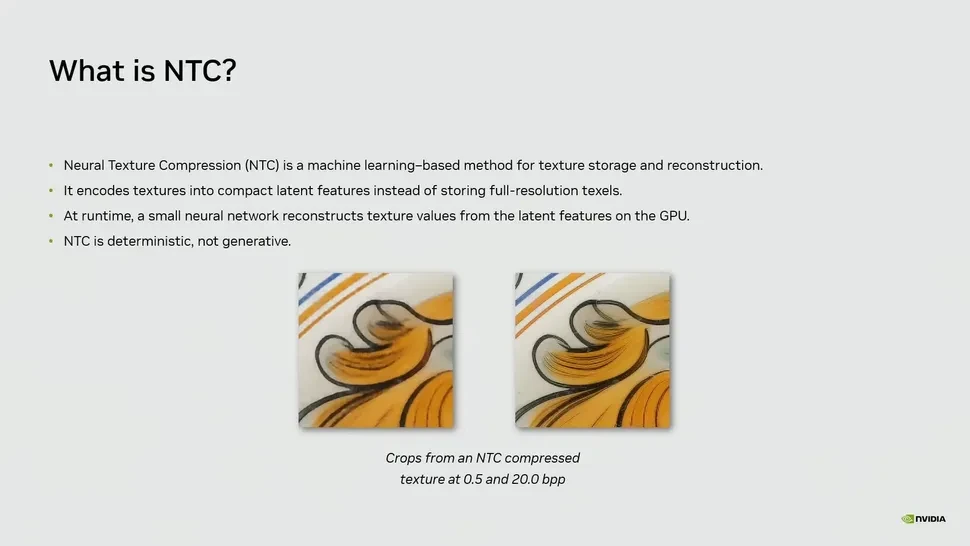

This is where Neural Texture Compression (NTC) comes in.

Beyond DLSS 5: What Neural Rendering Really Means

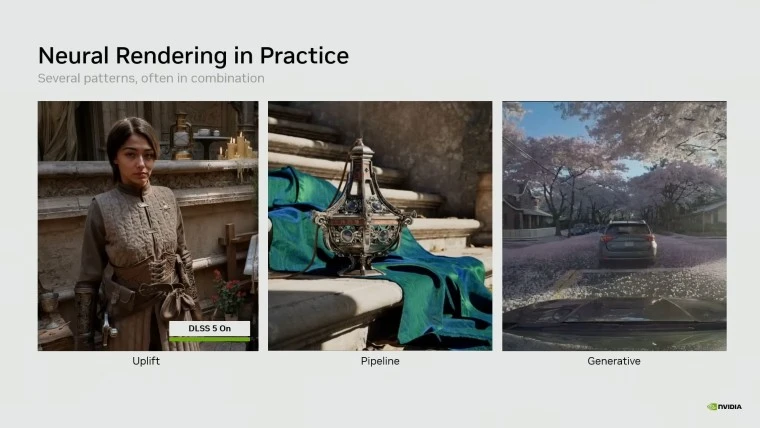

DLSS 5, introduced earlier at GTC 2026, focuses on reconstructing and enhancing already rendered images. It’s impressive, no doubt. But it’s also just one piece of a much larger puzzle.

The GDC presentation shifted attention to something deeper: embedding small neural networks directly into the rendering pipeline itself. Instead of treating AI as a post-processing layer, NVIDIA is integrating it into the core logic of how textures, materials, and even lighting data are handled.

This shift matters. Because once AI becomes part of the pipeline—not just an add-on—it starts influencing everything: memory usage, asset complexity, and even how games are built from the ground up.

How Neural Texture Compression Slashes VRAM Usage

Let’s talk about the most striking part of the demo.

In a scene called Tuscan Wheels, NVIDIA compared traditional block compression (the BCn formats) with its new Neural Texture Compression. The results were dramatic.

VRAM usage dropped from 6.5 GB to just 970 MB.

And here’s the surprising part: visual quality remained very close to the original.

Instead of storing full-resolution textures, NTC encodes them into compact latent representations. Then, at runtime, a small neural network reconstructs the texture directly on the GPU. It’s not generating new content—it’s decoding compressed data in a smarter way.
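To make the decode step concrete, here is a minimal sketch in Python with NumPy. It is not NVIDIA’s implementation: the grid size, channel counts, network shape, and the function names `bilinear_sample` and `decode_texel` are all assumptions chosen for illustration, and the weights are random rather than trained. It only shows the general idea of sampling a low-resolution latent grid and running a small network on the result.

```python
import numpy as np

def bilinear_sample(latents: np.ndarray, u: float, v: float) -> np.ndarray:
    """Bilinearly sample an (H, W, C) latent grid at normalized coords u, v in [0, 1]."""
    h, w, _ = latents.shape
    x = u * (w - 1)
    y = v * (h - 1)
    x0, y0 = int(np.floor(x)), int(np.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = (1 - fx) * latents[y0, x0] + fx * latents[y0, x1]
    bottom = (1 - fx) * latents[y1, x0] + fx * latents[y1, x1]
    return (1 - fy) * top + fy * bottom

def decode_texel(latents, weights, u, v):
    """Run a tiny two-layer MLP on the sampled latent vector to get texel channels."""
    w1, b1, w2, b2 = weights
    z = bilinear_sample(latents, u, v)
    hidden = np.maximum(0.0, z @ w1 + b1)  # ReLU activation
    return hidden @ w2 + b2

# Hypothetical sizes: 8 latent channels, 16 hidden units, 4 output channels.
LATENT_CHANNELS, HIDDEN, OUT_CHANNELS = 8, 16, 4
rng = np.random.default_rng(0)
latents = rng.standard_normal((64, 64, LATENT_CHANNELS))
weights = (
    rng.standard_normal((LATENT_CHANNELS, HIDDEN)),
    np.zeros(HIDDEN),
    rng.standard_normal((HIDDEN, OUT_CHANNELS)),
    np.zeros(OUT_CHANNELS),
)

texel = decode_texel(latents, weights, u=0.25, v=0.75)
print(texel.shape)  # (4,)
```

In a real system the latents and weights would be fitted per texture set so that the decoded output matches the source art. The point of the sketch is only that the stored data is a small grid plus a few weight matrices, and the full texel values exist only after decoding.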

What this effectively means is that textures are no longer static assets in the traditional sense. They become compact encoded data that a network must interpret at runtime, rather than pixels that are simply read.

Even more interesting, NVIDIA claims that with the same memory budget, NTC can preserve more detail than conventional block compression.

So this isn’t just about saving space. It’s about using that space more efficiently.

And the implications ripple outward quickly:

- Smaller game sizes

- Faster downloads and patches

- Lower bandwidth usage

- More room for higher-quality assets on the same hardware

This is the kind of optimization that players don’t immediately notice—but developers absolutely do.

Neural Materials: Rethinking How Surfaces Are Stored

Textures are only part of the story.

Another piece NVIDIA highlighted is something called Neural Materials. Traditionally, rendering a surface requires multiple textures and complex calculations based on a BRDF (bidirectional reflectance distribution function). It’s accurate, but also heavy.

NVIDIA’s approach compresses the behavior of materials into a compact latent format, similar to what they’re doing with textures. A neural network then reconstructs how that material should look under lighting conditions.

In their demo, a material setup with 19 channels was reduced to just 8, while rendering at 1080p ran 1.4x to 7.7x faster.

That’s not a small optimization—it’s a redefinition of how materials are handled.

Instead of storing exhaustive data about every surface property, you store a compressed representation of how the material behaves. The GPU, assisted by AI, does the rest in real time.
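As a rough illustration of that idea, the sketch below feeds an 8-channel latent code plus light and view directions into a tiny network that returns an RGB value. Everything here is hypothetical: the `shade` function, the layer sizes, and the random untrained weights are assumptions for illustration, not NVIDIA’s Neural Materials design. Only the 8-channel figure comes from the demo described above.

```python
import numpy as np

def normalize(v: np.ndarray) -> np.ndarray:
    """Scale a vector to unit length."""
    return v / np.linalg.norm(v)

def shade(latent, light_dir, view_dir, weights):
    """Toy material decoder: latent code plus directions in, RGB reflectance out."""
    w1, b1, w2, b2 = weights
    features = np.concatenate([latent, normalize(light_dir), normalize(view_dir)])
    hidden = np.maximum(0.0, features @ w1 + b1)  # ReLU activation
    logits = hidden @ w2 + b2
    return 1.0 / (1.0 + np.exp(-logits))  # sigmoid squashes each channel toward [0, 1]

LATENT_CHANNELS, HIDDEN = 8, 16   # 8 matches the demo; HIDDEN is an assumption
IN_FEATURES = LATENT_CHANNELS + 3 + 3  # latent + light direction + view direction
rng = np.random.default_rng(1)
weights = (
    0.1 * rng.standard_normal((IN_FEATURES, HIDDEN)),
    np.zeros(HIDDEN),
    0.1 * rng.standard_normal((HIDDEN, 3)),
    np.zeros(3),
)

latent = rng.standard_normal(LATENT_CHANNELS)
rgb = shade(latent, np.array([0.0, 1.0, 1.0]), np.array([0.0, 0.0, 1.0]), weights)
print(rgb.shape)  # (3,)
```

The difference from a plain texture decode is the extra inputs: the network sees the lighting and viewing directions, so the latent code stands in for how the surface responds to light rather than for a fixed set of per-channel values.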

It’s a subtle shift in philosophy: from storing everything explicitly to storing just enough and reconstructing the rest intelligently.

Why This Matters More Than You Think

There’s been a lot of debate around AI in graphics—especially with concerns about “AI-generated artifacts” or the so-called loss of artistic intent. DLSS sometimes sits at the center of that discussion.

But technologies like Neural Texture Compression and Neural Materials operate differently. They’re not trying to change the look of the final image. They’re trying to optimize how that image is built.

And that distinction is important.

Because in the long run, performance and memory efficiency are just as critical as visual fidelity—if not more. As games grow in size and complexity, VRAM becomes one of the biggest bottlenecks, especially on mid-range hardware.

Reducing memory usage by up to 85% with little visible loss in quality isn’t just an improvement. It’s a paradigm shift.
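The 85% figure appears consistent with the Tuscan Wheels numbers quoted earlier (6.5 GB down to 970 MB). A quick check, assuming 1 GB is counted as 1000 MB (that convention is an assumption; the source does not say which one the demo used):

```python
BCN_MB = 6.5 * 1000   # 6.5 GB with BCn, assuming 1 GB = 1000 MB
NTC_MB = 970.0        # 970 MB with NTC

def reduction_percent(before: float, after: float) -> float:
    """Percentage saved when going from `before` to `after`."""
    return (before - after) / before * 100.0

saving = reduction_percent(BCN_MB, NTC_MB)
ratio = BCN_MB / NTC_MB
print(f"{saving:.1f}% less VRAM, {ratio:.1f}x smaller")  # 85.1% less VRAM, 6.7x smaller
```

Counting 1 GB as 1024 MB instead shifts the result only slightly, to roughly 85.4%.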

It opens the door to:

- More detailed worlds on existing GPUs

- Better scalability across different hardware tiers

- Reduced development constraints for artists and designers

In a way, this is the kind of innovation that doesn’t scream for attention—but quietly reshapes the industry.

And years from now, we might look back and realize that this—not DLSS—was the moment neural rendering truly changed everything.

Source: Tom’s Hardware