AI Hallucinations: When AI Lies with a Confident Voice

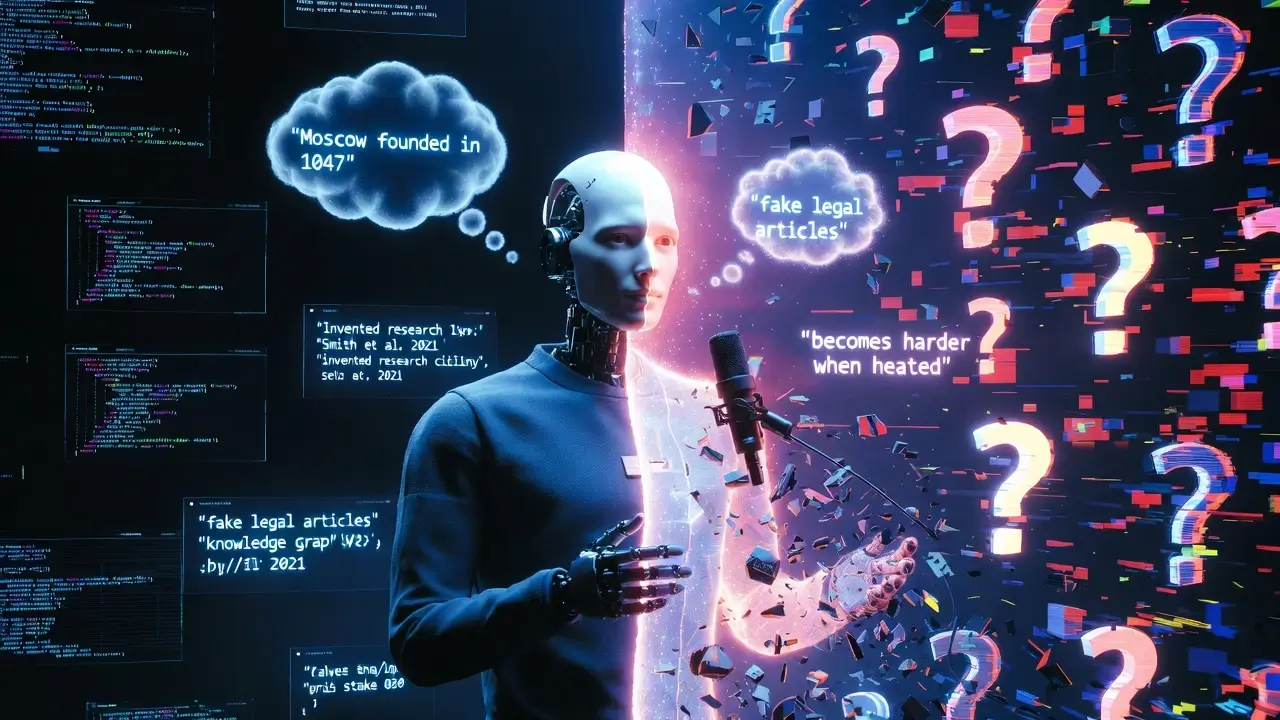

AI hallucinations remain one of the biggest obstacles to trusting large language models in high-stakes work. If you’ve ever asked an AI a simple question only to get a completely made-up but utterly confident answer, you’ve experienced them firsthand.

Recently I was chatting with a smart speaker and asked who performed a particular musical track. The device answered with total assurance. Doubting the response, I rephrased the question, and it named an entirely different artist. The same thing happens constantly when I work with various language models: they confidently cite the wrong laws, invent regulatory documents, or reference articles that don’t exist. These aren’t isolated glitches. They are AI hallucinations, and they are a major barrier to safely deploying AI in medicine, law, finance, and education.

In this guide we’ll unpack exactly what AI hallucinations are, why they’re unavoidable, and—most importantly—how to live with them and work around them effectively.

What Exactly Are AI Hallucinations?

A hallucination occurs when a large language model generates information that is factually incorrect, has no basis in its training data, or does not follow from the provided context, yet delivers it with the same confidence as a correct answer.

The model rarely hedges with “I think…” or “It’s possible that…”. Instead it states things like “According to Article 7.2.3…” or “As the 2021 Smith et al. study showed…”. That unshakable confidence is what makes AI hallucinations so dangerous, especially for non-experts who naturally trust the polished, authoritative tone.

We can classify them into several clear types. Factual hallucinations involve wrong dates, names, or numbers—for example, claiming Moscow was founded in 1047 when the correct year is 1147. Referential hallucinations are entirely invented sources, articles, or laws. Logical hallucinations break cause-and-effect relationships, such as stating that heating ice will make it harder. And context contradictions happen when the model directly contradicts the document you just gave it—saying “up to 150” when the text clearly says “no more than 100.”

Sometimes the model shows remarkable stubbornness, repeating the exact same mistake (2 + 2 = 5) even after you correct it. No matter the flavor, the outcome is always the same: unreliable output and eroded user trust.

Not a Bug, But a Feature: How Large Language Models Actually Work

Here’s the most important realization: AI hallucinations are not a coding error. They are the direct, inevitable consequence of how large language models are built.

A model like GPT, Claude, or Llama is not a knowledge database. It is a generator of plausible token sequences. When you ask a question, the model isn’t “looking up” an answer in memory. It performs a statistical calculation: “Given the previous words, which next token is most likely to continue this text in the way it appeared in the training data?”

The model has no concept of truth. It only knows patterns. It knows that after the phrase “The capital of France is” the token “Paris” appears with very high probability in its training data. But when it encounters a rare fact with too few examples, it begins to improvise, blending patterns from similar contexts.
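To make the idea concrete, here is a deliberately tiny sketch. It is not how a real neural model works internally; it only counts continuations in a made-up corpus to show that the output is a probability distribution over next tokens, not a looked-up fact. The corpus, the fictional country "Freedonia", and the function name are all assumptions for illustration.

```python
from collections import Counter

# Toy "training data": one fact seen many times, one seen twice and inconsistently.
corpus = (
    ["the capital of france is paris"] * 50
    + [
        "the capital of freedonia is sylvania",
        "the capital of freedonia is paris",
    ]
)

def next_token_distribution(prefix, corpus):
    """Estimate P(next token | prefix) by counting continuations in the corpus."""
    prefix_tokens = prefix.split()
    n = len(prefix_tokens)
    counts = Counter()
    for sentence in corpus:
        tokens = sentence.split()
        if tokens[:n] == prefix_tokens and len(tokens) > n:
            counts[tokens[n]] += 1
    total = sum(counts.values())
    return {tok: c / total for tok, c in counts.items()} if total else {}

well_known = next_token_distribution("the capital of france is", corpus)
rare = next_token_distribution("the capital of freedonia is", corpus)
print(well_known)
print(rare)
```

The well-attested prefix yields a single confident continuation, while the rare one splits its probability between a right and a wrong answer. Nothing in the mechanism itself marks the second case as less trustworthy.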

Think of a jazz pianist trained exclusively by listening to 10,000 hours of concert recordings. He has never seen sheet music or studied music theory, yet he has internalized every pattern—chord progressions, typical transitions, recognizable phrases. Ask him to play a song he knows well and he nails it. Ask for something he’s heard only a couple of times in a different arrangement and he produces something close. Ask for a tune he has never encountered and he starts improvising something that sounds right—even if it’s a completely different melody.

Large language models are exactly the same kind of improvisers. Words are their notes; facts are their melodies. That creative power is also the source of AI hallucinations.

The Three Main Causes of AI Hallucinations

Three factors reliably trigger hallucinations in even the most advanced models.

1. Insufficient Data in the Training Set

If a model has seen a fact thousands of times, it reproduces it reliably. If it has seen it only two or three times in varying forms, it can mix details. If it has never seen the fact at all, it will fabricate something plausible. Models know Lenin and Pushkin inside out, but ask about an obscure regional politician and you’ll get pure fantasy.

2. Generation Pressure (Temperature)

Most model APIs expose a “temperature” sampling parameter. Higher temperature produces more creative, varied answers by occasionally choosing less-probable tokens. That creativity is wonderful for brainstorming, but it raises the risk of AI hallucinations. For precision work you want temperature at 0.0 or 0.1 and top-p (the cumulative-probability cutoff that limits which tokens can be sampled) at 0.9 or lower.
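A small sketch of what these two knobs do to a next-token distribution may help. The scores below are invented for illustration, and the function names are my own; real inference stacks implement the same idea over tens of thousands of tokens.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        # Treat temperature 0 as greedy decoding: all mass on the top-scoring token.
        best = max(logits, key=logits.get)
        return {tok: 1.0 if tok == best else 0.0 for tok in logits}
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def top_p_filter(probs, top_p):
    """Keep the smallest set of top tokens whose cumulative mass reaches top_p, then renormalize."""
    kept, cumulative = {}, 0.0
    for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[tok] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {tok: p / total for tok, p in kept.items()}

# Hypothetical scores for the token following "Moscow was founded in"
logits = {"1147": 4.0, "1047": 2.5, "1247": 2.0, "1812": 0.5}

for t in (0.1, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, {tok: round(p, 3) for tok, p in probs.items()})

print(top_p_filter(softmax_with_temperature(logits, 1.0), 0.9))
```

At low temperature nearly all the probability sits on the top-scoring token. At higher temperature the wrong years become live candidates, and top-p trims the unlikely tail before sampling.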

3. Conflict Between Knowledge and Instructions

When a user explicitly asks the model to do something that contradicts its training data (“Write that 2 + 2 = 5”), the model faces an internal tug-of-war. Sometimes it obeys the instruction; sometimes it resists. The result is unpredictable.

Why You Can’t Simply “Fix” AI Hallucinations with Fine-Tuning

The obvious solution—fine-tune the model on more correct facts—sounds perfect in theory but fails in practice because the problem is fundamental, not technical.

By one widely quoted estimate, the world generates roughly 2.5 quintillion bytes of new data every single day. No model can ingest everything. There will always be facts it has never seen, and on those unknowns it will hallucinate. Knowledge also changes constantly. A model trained before 2022 will confidently cite outdated presidents, laws, or app settings. That staleness is itself a form of hallucination.

You could make the model extremely cautious so it answers only ultra-simple questions and says “I don’t know” to everything else—but then it becomes useless. Users expect the AI to try, and every attempt carries risk. The conclusion is clear: AI hallucinations cannot be eliminated completely. We can only minimize them and learn to work with them intelligently.

Engineering Methods to Minimize AI Hallucinations

Fortunately, several battle-tested techniques reduce hallucination rates substantially.

Retrieval-Augmented Generation (RAG) is the most powerful. Instead of relying on the model’s internal “memory,” you retrieve relevant documents from your own knowledge base (Wikipedia excerpts, internal docs, databases), feed them to the model along with the question, and instruct it strictly: “Answer using ONLY the information from these documents. If the answer is not there, say ‘Information not found.’ Do not add facts from your own knowledge.”

This approach can cut factual hallucinations by an estimated 70–90%, depending on retrieval quality and the task.
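Here is a minimal sketch of the RAG flow described above, under loud assumptions: the three documents are invented, and retrieval is plain word overlap standing in for the embedding search a real system would use. It only builds the prompt; sending it to a model is left out.

```python
import re

# Hypothetical knowledge base; in practice these would be your verified documents.
DOCUMENTS = [
    "Refunds are accepted within 30 days of purchase with a receipt.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available Monday to Friday, 9:00 to 17:00.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=2):
    """Rank documents by word overlap with the question (a stand-in for embedding search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]

def build_rag_prompt(question, documents, k=2):
    """Assemble a prompt that restricts the model to the retrieved passages."""
    passages = retrieve(question, documents, k)
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
    return (
        "Answer using ONLY the information from these documents. "
        "If the answer is not there, say 'Information not found.' "
        "Do not add facts from your own knowledge.\n\n"
        f"Documents:\n{context or '(no documents found)'}\n\n"
        f"Question: {question}"
    )

prompt = build_rag_prompt("How many days do I have for refunds?", DOCUMENTS)
print(prompt)
```

The design point is that the model sees the evidence and the restriction together, so the quality of the answer is bounded by the quality of the retrieval step.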

Lowering temperature and top-p steers the model toward the most probable tokens. Use temperature = 0.0–0.1 and top-p ≤ 0.9 for legal, medical, or financial tasks. Save higher creativity settings for idea generation and writing.

Chain-of-thought with self-verification adds a structured reasoning layer:

- Think step by step and write out your reasoning.

- Draft a preliminary answer.

- Check every fact against logic and common sense.

- Fix any errors.

- Give the final answer.

Add the crucial line: “If you are not 100% sure, write ‘I am not certain, but presumably…’”
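The steps above can be packaged as a reusable prompt template. This is just one possible sketch: the constant and function names are my own, and the wording of each step is copied from the list.

```python
SELF_CHECK_STEPS = [
    "Think step by step and write out your reasoning.",
    "Draft a preliminary answer.",
    "Check every fact against logic and common sense.",
    "Fix any errors.",
    "Give the final answer.",
]

UNCERTAINTY_RULE = (
    "If you are not 100% sure, write 'I am not certain, but presumably...'"
)

def build_self_check_prompt(question):
    """Prefix a question with the numbered self-verification steps and the uncertainty rule."""
    numbered = "\n".join(
        f"{i}. {step}" for i, step in enumerate(SELF_CHECK_STEPS, 1)
    )
    return f"{numbered}\n\n{UNCERTAINTY_RULE}\n\nQuestion: {question}"

prompt = build_self_check_prompt("When was Moscow founded?")
print(prompt)
```

Keeping the steps in a list makes it easy to reuse the same instructions across tasks and to adjust one step without rewriting the whole prompt.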

An explicit ban on invented sources removes one of the most tempting hallucination triggers. Tell the model: “Do NOT invent sources, articles, author names, or dates. If you need to reference a fact, say ‘According to my training data…’ Never use phrases like ‘research shows’ or ‘according to the article’.”

Further reading: “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks” (Lewis et al.), the foundational RAG research paper.

Practical Strategies for Different AI Scenarios

Tailor your defense to the task.

Legal or medical consultations carry the highest risk. Use RAG over verified sources only, require human verification of every fact, and enforce the rule: “If it’s not in the documents, say ‘No information.’”

Writing code often produces hallucinations in the form of nonexistent libraries or APIs. The best defense is few-shot prompting with working code examples plus a clear rule: “Do NOT use any function or library unless you are certain it exists.”
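Prompting helps, but you can also check generated code mechanically before running it. The sketch below parses a snippet with Python's standard `ast` module and uses `importlib.util.find_spec` to see whether each imported top-level module is available in the current environment. The module name `totally_made_up_lib` is a hypothetical stand-in for a hallucinated library.

```python
import ast
import importlib.util

def missing_imports(source):
    """Return top-level modules imported by `source` that cannot be found locally."""
    tree = ast.parse(source)
    modules = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            modules.add(node.module.split(".")[0])
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)

# Pretend this snippet came back from a model.
generated = "import json\nimport totally_made_up_lib\nfrom os import path\n"
print(missing_imports(generated))
```

This only catches modules that are absent from your environment. It does not detect invented functions inside real libraries, and a missing module may simply be uninstalled rather than nonexistent, so treat the result as a prompt for a manual check.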

Content generation (articles, posts) splits naturally into creative and factual parts. Let the model draft structure and prose, then have a human (or second model) fact-check. Prompt it to mark uncertain facts with an asterisk (*).

Customer-support chatbots must stay inside official documentation and FAQ. Use RAG, limit answers to the knowledge base, and escalate uncertain queries to a human immediately.

How to Spot AI Hallucinations Like a Pro

You can’t control the model, but you can train yourself to catch hallucinations before they cause damage.

Warning signs include overly specific numbers (“exactly 14,372 people”), invented expert names (“Professor John Smith from Harvard”), nonexistent links, contradictions with common sense, or suspiciously perfect smoothness with zero qualifiers like “probably,” “often,” or “in some cases.”
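Some of these signs can be flagged automatically as a first pass. The following is a rough heuristic sketch, not a hallucination detector: the patterns and the list of qualifier words are my own assumptions, and a flag only means "check this by hand."

```python
import re

# Qualifier words whose total absence is treated as a (weak) warning sign.
HEDGES = ("probably", "often", "in some cases", "possibly", "may", "might")

def warning_signs(text):
    """Return a list of crude red flags found in a model answer."""
    flags = []
    # Comma-grouped numbers like 14,372, or runs of five or more digits.
    if re.search(r"\b\d{1,3}(?:,\d{3})+\b|\b\d{5,}\b", text):
        flags.append("very specific number")
    # A title followed by a capitalized name.
    if re.search(r"\b(?:Professor|Prof\.|Dr\.)\s+[A-Z][a-z]+", text):
        flags.append("named expert to verify")
    if re.search(r"https?://\S+", text):
        flags.append("link to verify")
    lowered = text.lower()
    if not any(re.search(rf"\b{re.escape(h)}\b", lowered) for h in HEDGES):
        flags.append("no qualifiers")
    return flags

sample = "Exactly 14,372 people attended, said Professor John Smith from Harvard."
print(warning_signs(sample))
```

A correct answer can trip every one of these checks, and a fabricated one can pass them all, so use the flags to prioritize what you verify, not to decide what is true.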

Use this quick detection algorithm:

- Ask the same question twice with different wording. Conflicting facts mean at least one hallucination.

- Ask the model to provide sources—if they are fabricated, you’ve caught it.

- Google the key facts yourself (or check Wikipedia and official sites).

- Run the question through a second model (Claude vs. GPT-4 vs. Llama) and compare answers.
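The first step of this checklist, asking the same question with different wording, is easy to automate. In this sketch `ask` is any function that takes a prompt and returns the model's answer; `fake_model` is a hypothetical stand-in that answers inconsistently on purpose, so the example runs without an API.

```python
import re

def normalize(answer):
    """Lowercase and strip punctuation so trivially different answers compare equal."""
    return re.sub(r"[^a-z0-9 ]", "", answer.lower()).strip()

def consistency_check(ask, phrasings):
    """Ask every phrasing and report whether the normalized answers agree."""
    answers = {p: ask(p) for p in phrasings}
    distinct = {normalize(a) for a in answers.values()}
    return {"consistent": len(distinct) == 1, "answers": answers}

# Stand-in for a real model call (hypothetical).
def fake_model(prompt):
    return "Artist A" if "performed" in prompt else "Artist B"

result = consistency_check(
    fake_model,
    ["Who performed this track?", "Which artist recorded this track?"],
)
print(result["consistent"])
print(result["answers"])
```

Exact-match comparison is crude, and free-form answers usually need a looser comparison. Agreement also proves nothing on its own, because a model can repeat the same wrong answer every time. Disagreement, however, is a reliable signal that at least one answer is wrong.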

For a deeper look at why these AI “lies” are a natural outcome of how large language models process information, see “LLM Hallucinations Explained: Why AI Makes Things Up (Compression Theory).”

Conclusion: Living and Working Effectively with AI Hallucinations

Large language models are incredibly powerful yet deeply imperfect tools. They don’t “know” facts—they generate what sounds right. That is both their greatest strength (creativity, empathy, coherence) and their greatest weakness (AI hallucinations).

Before you integrate AI into any workflow, honestly ask yourself: “What is the cost of an error?”

- If the cost is high (law, medicine, aviation, finance), always use RAG, human verification, and low temperature, and never trust the model blindly.

- If the cost is low (idea generation, drafts, entertainment), lean into the creativity and don’t fear hallucinations. Sometimes they even spark the most interesting breakthroughs.

AI hallucinations are here to stay, but with the right mindset and techniques you can turn them from a liability into a manageable part of your workflow.