Real-Time Translation Earbuds: How Google Is Turning Any Headphones into AI Translators

There’s something quietly transformative happening in the world of language technology. What used to feel like science fiction—understanding someone instantly in another language—is now becoming surprisingly accessible. And Google’s latest move might be one of the most practical steps in that direction.

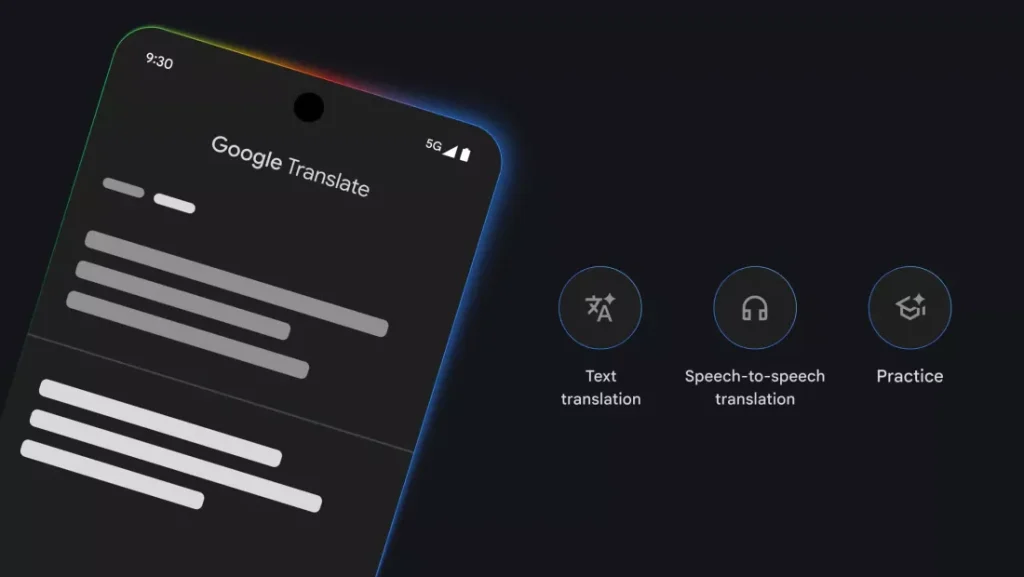

Instead of requiring specialized hardware, Google is effectively turning any pair of headphones into real-time translation earbuds, powered by its Gemini AI. It’s a subtle shift, but one that could change how people interact across languages on a daily basis.

A Quiet Shift in How We Communicate Across Languages

For years, translation tools have been improving in accuracy, but they’ve mostly lived on screens—typed phrases, delayed responses, slightly robotic outputs. Now, the focus is shifting toward something much more natural: real-time, spoken interaction.

Google’s latest update to its Translate app reflects exactly that. Rather than just converting text, it’s aiming to replicate the rhythm of real conversation—tone, emphasis, even cultural nuance.

This isn’t just about convenience. It’s about removing friction. Imagine listening to a conversation, a presentation, or even a TV show in a language you don’t speak—and understanding it instantly, without pausing or reading subtitles.

That’s the direction we’re heading.

How Google’s Real-Time Translation Actually Works

The core idea is surprisingly simple: open the Google Translate app, connect your Android device to your headphones, and let the AI handle the rest.

Once activated, the system can translate live audio from conversations, speeches, or media content directly into your ears. But what makes it interesting isn’t just the speed—it’s the quality of interpretation.

Gemini, Google’s AI model behind this feature, attempts to preserve the speaker’s delivery. That includes tone, cadence, and emphasis—details that traditional translation often ignores. It also goes a step further by localizing idioms instead of translating them word-for-word, which makes the output feel far more natural.

In practice, this means the translation doesn’t just sound correct—it sounds human.
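To make the idiom point concrete, here is a toy sketch of the difference between a word-for-word rendering and a localized one. It is only an illustration: the hand-written table `IDIOMS_ES_EN` and the helper `translate_idiom` are hypothetical names made up for this example, and a lookup table is not how Gemini or Google Translate actually works.

```python
# Toy illustration only: a hand-written idiom table, NOT Google's or Gemini's method.
# IDIOMS_ES_EN and translate_idiom are hypothetical names invented for this sketch.
IDIOMS_ES_EN = {
    "costar un ojo de la cara": {
        "literal": "to cost an eye of the face",
        "localized": "to cost an arm and a leg",
    },
    "estar en las nubes": {
        "literal": "to be in the clouds",
        "localized": "to have your head in the clouds",
    },
}


def translate_idiom(phrase: str, localize: bool = True) -> str:
    """Return the localized or literal English rendering of a known Spanish idiom."""
    entry = IDIOMS_ES_EN.get(phrase.strip().lower())
    if entry is None:
        raise KeyError(f"no entry for idiom: {phrase!r}")
    return entry["localized" if localize else "literal"]


if __name__ == "__main__":
    phrase = "costar un ojo de la cara"
    print(translate_idiom(phrase, localize=False))  # word-for-word rendering
    print(translate_idiom(phrase))                  # localized rendering
```

The literal version is technically accurate but sounds odd to an English speaker, while the localized version swaps in the equivalent English expression. That gap is what the feature is trying to close in live speech.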

Availability, Languages, and Early Rollout

Right now, the feature is still in beta, and access is limited. It’s currently available on Android devices in the United States, Mexico, and India.

But even in this early stage, the scope is impressive. The system supports over 70 languages, including widely spoken ones like English, Spanish, Chinese, and Hindi, as well as others such as Ukrainian, Zulu, and Palestinian Arabic.

That breadth matters. Translation tools often struggle not because of accuracy, but because of limited language coverage. Google seems to be addressing that head-on.

Meanwhile, Gemini-powered text translation is also expanding, supporting around 20 languages across Android, iOS, and web platforms.

Support for iOS and additional regions is expected later, which suggests this is just the beginning rather than a finished rollout.

Google vs Apple: A Subtle but Important Difference

Apple isn’t sitting still in this space. With its latest OS update, it also introduced live, in-ear translation for devices that support Apple Intelligence.

At a glance, the two approaches look similar. Both aim to deliver real-time audio translation directly through earbuds. But the differences start to appear when you look closer.

Apple’s implementation currently works with a limited set of AirPods models and supports fewer languages.

Google, on the other hand, takes a more open approach. By relying on software rather than tightly controlled hardware, it allows almost any headphones to function as translation devices. Combined with broader language support, this gives Google a noticeable edge in accessibility.

This difference likely reflects a deeper strategic contrast. Google has been investing heavily in Gemini and integrating it across its ecosystem, while Apple is still building out its AI infrastructure and often relies on external models.

It’s not just a feature comparison—it’s a glimpse into how each company approaches AI as a platform.

Beyond Translation: Learning and Language Practice

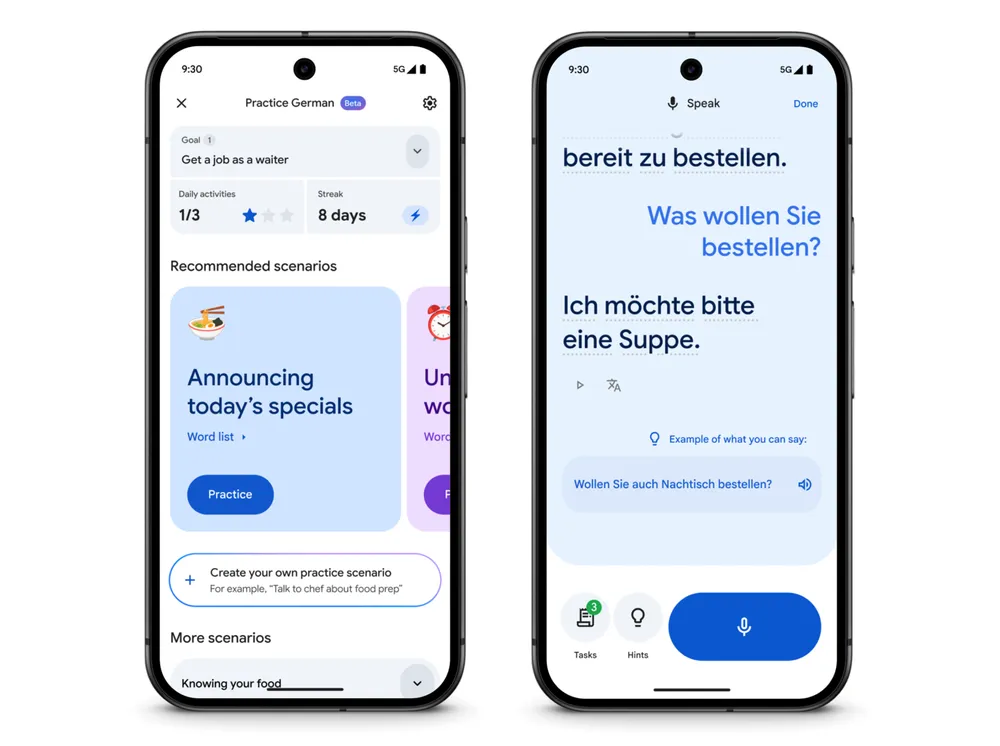

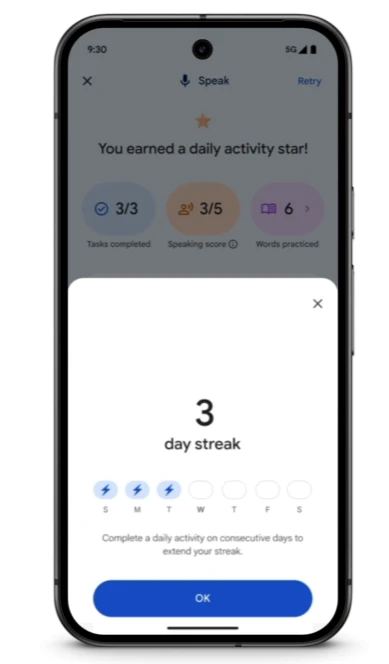

Interestingly, Google isn’t stopping at real-time translation. The Translate app itself is evolving into something closer to a learning tool.

Recent updates introduce features that resemble language-learning apps like Duolingo. Users can now practice languages, receive improved feedback, and track daily progress—all within the same ecosystem.

The expansion also includes new regions and additional language courses. For example, English speakers can now learn German and Portuguese, while English courses are available for speakers of languages like Bengali, Mandarin, and Italian.

This blending of translation and education feels intentional. Instead of just helping users understand other languages, Google is nudging them toward actually learning them.

Why This Matters More Than It Seems

At first glance, real-time translation earbuds might feel like just another tech feature—impressive, but niche. But the implications are much broader.

Language has always been one of the biggest barriers to global communication. Removing that barrier—even partially—changes how people travel, work, learn, and connect.

And what makes Google’s approach particularly interesting is its accessibility. You don’t need new hardware. You don’t need expensive devices. If you already have a smartphone and headphones, you’re essentially ready.

That lowers the barrier to entry dramatically.

We’re still in the early stages, of course. The technology isn’t perfect, and real-world conversations are messy, unpredictable, and full of nuance. But the direction is clear.

Real-time translation is no longer a distant goal. It’s becoming something people can actually use—and soon, something they might rely on without thinking twice.

Source: Google